Your AI memory is portable now, but the platforms are about to make that very complicated

...and we've seen every move before - just not played with this kind of asset

Disclaimer: The views here are my own and do not represent my employer or anyone else.

There’s a Claude feature that shipped recently that I think has more enterprise implications than most people are giving it credit for yet.

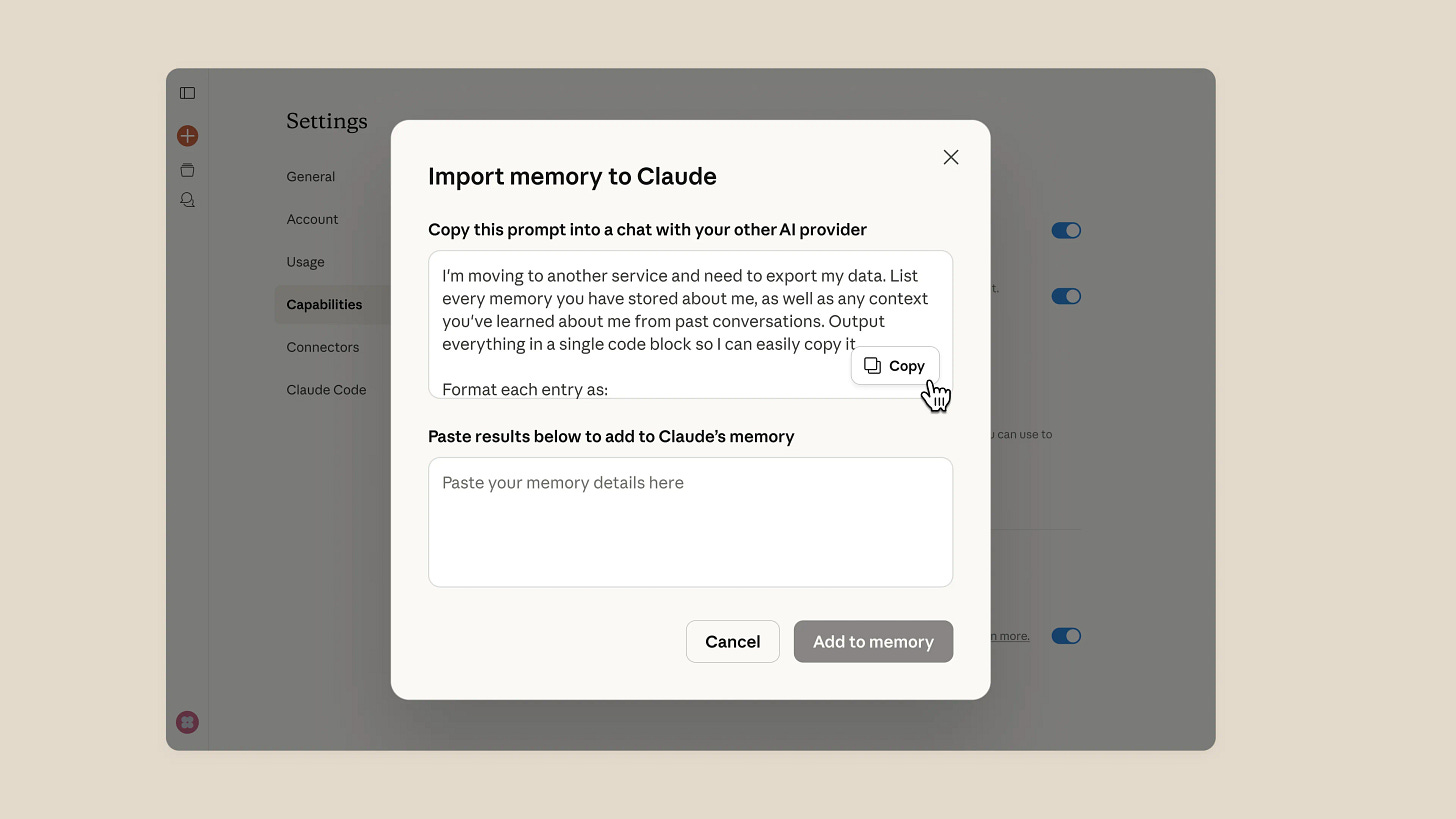

Claude’s memory import: you run a prompt inside ChatGPT or Gemini, it exports everything those models have learned about you - your communication style, your projects, your preferences, your working context - and you paste it into Claude. One copy-paste and Claude picks up where another AI left off.

On the surface this looks like a switching cost feature, a consumer convenience, or competition between AI vendors fighting for retention.

I think it is more than that. It is at least three things simultaneously: a governance problem, a competitive intelligence risk, and the opening move in a platform war that is going to get ugly in ways the tech industry has seen before.

Memory is not a knowledge base

This is the distinction most enterprise AI conversations are still struggling with, and it matters enormously for what comes next.

A knowledge base is explicit - the system prompt your team wrote, the style guide you uploaded, the instructions someone encoded: always write in second person, never use jargon, do not use choppy sentences, our ICP is mid-market SaaS. You can read a knowledge base, audit it, version control it, and hand it to a new employee on day one.

Memory is different - it is what the model infers from watching you work over hundreds of conversations - not what you told it, but what it noticed. The way you tend to reframe a question before you answer it, the fact that you write three drafts before you’re satisfied and you always cut the first paragraph, that you soften feedback with context before you deliver it, that when you say “interesting” you usually mean “I disagree,” and that your strategic instincts run ahead of your data and you know it, so you ask for pushback.

None of that is in your skills.md or system prompt. You could not encode it if you tried - partly because you’re not fully aware of it yourself, and partly because the value is in the accumulation and texture, not in any single rule.

This is what twelve months of AI interaction actually produces: not a better chatbot, but a model of how you think. And now that model is portable.

Umm, how is this an enterprise problem? What do we call it?

Most enterprise AI deployments assume a relatively clean boundary between the tool and the user. The company licenses the platform, sets the guardrails, owns the data layer, and the employee uses it within that context.

Memory portability breaks that model in at least three ways:

The first is context leakage: when an employee exports their AI memory from a company-licensed ChatGPT Enterprise instance into a personal Claude account, what exactly are they moving? Technically it’s their preferences and communication patterns, but practically, those patterns are built on months of work conversations, internal project context, proprietary framing, and strategic language absorbed from working on confidential things. The memory is not the data, but the memory is shaped by the data. That distinction is going to get tested in legal contexts that I don’t think have good precedent yet.

The second is institutional context loss: enterprises have spent the last two years trying to capture organizational knowledge - what sales learned from a lost deal, what a departing engineer knew about a system, what a senior marketer’s instincts were built on. AI memory is, quietly, becoming one of the richest repositories of individual working context that has ever existed. When someone leaves and takes that memory with them, or when a team migrates tools and the memory doesn’t transfer cleanly, enterprises lose something they don’t have good language to describe yet - it’s not a file, or a document, but closer to losing the person’s judgment - a distilled version of how they approached work, sitting inside a model they’re taking with them.

The third is governance without visibility: most enterprise AI policies are tool-level policies, i.e. which platforms are approved, what data can be uploaded, which outputs need review, and so on. Memory portability makes the unit of governance the individual rather than the tool - and enterprises are nowhere near equipped for that. Your CIO/CISO can audit what your employees uploaded to ChatGPT but cannot audit what ChatGPT learned about your employees over eighteen months of conversations, or where that learned context went when someone switched platforms last Friday.

Wait.. is there a GTM implication we need to talk about?

Of course there is, and I think sales and marketing are probably the most exposed here, and not for the obvious reasons.

The obvious reason is customer context: if a sales rep builds twelve months of deal history, objection patterns, and relationship nuance into their AI memory, and then leaves - or switches tools - that context walks out the door in a way that is harder to track than a downloaded Salesforce export.

The less obvious reason is that AI-assisted communication is becoming personalized at a level that reflects the individual, not the company. When a sales rep uses an AI that knows them deeply - their persuasion style, their customer vocabulary, their instinct for when to push and when to wait - the output starts to reflect a cognitive fingerprint as much as a company playbook. The line between “company voice” and “individual voice enhanced by AI” is dissolving; that has implications for brand consistency, for training, for what you actually lose when someone leaves.

The even less obvious reason is competitive intelligence - your AI memory reflects what you worked on. If a competitor hires your VP of Demand Generation and that person imports their AI memory into the new company’s tools, you have not lost a Google Slides or PowerPoint deck, but potentially a distilled model of how your best marketer thinks about your category, your customers, and your strategy. The subtle stuff - the framing instincts, the prioritization patterns, the things that made them good - compressed into a transferable file.

It is already happening right now, invisibly, at companies that have no policy framework for it, and it’s only going to get harder from here.

Here’s the platform war I think this is about to trigger

This gets interesting at the vendor level, because the AI companies are about to face a strategic tension that every major platform company has faced before - and most have handled badly.

Right now Claude is making memory import frictionless because they are the challenger - they want to make it easy to leave ChatGPT, and that is rational. Every platform that is behind on market share has played the interoperability card. Google made it easy to import contacts from Yahoo Mail, Spotify made it easy to transfer playlists from iTunes, Notion made it easy to import from Evernote, and so on. The message is always the same: your data belongs to you, switching is painless, come on over.

What happens next is also predictable, because we have seen this movie before :)

Once the challenger becomes the incumbent - or even before, once they have enough users invested - the export experience quietly degrades. Note that it doesn’t disappear, it just degrades. The import button stays prominent, but the export button moves two menus deep, the data format becomes slightly proprietary, and the exported file technically works but loses fidelity.

Facebook made it easy to import contacts for years, then made the exported data progressively less useful anywhere else. LinkedIn imports your resume beautifully and exports a PDF that no other platform reads cleanly. Apple’s ecosystem is the canonical example of this - every piece of hardware imports from competitors gracefully, and exports to them in formats that technically comply with data portability regulations while practically making migration painful enough that most people don’t bother.

The AI memory version of this is going to be more subtle and more consequential than any of those, because what degrades in export is not a contact list or a playlist, but an inference layer - the subtle cognitive pattern that made the memory valuable, and that is genuinely hard to serialize cleanly. That gives vendors a convenient technical excuse for lossy exports that will be very difficult to distinguish from deliberate friction.

The savvy AI vendors will also start building memory experiences that are structurally hard to replicate elsewhere - and I don’t think it will be done through lock-in of data, but through lock-in of depth.

The longer you stay, the more the model understands the things that cannot be encoded in a prompt, and the more it knows, the worse the cold-start problem feels when you switch. That is not a moat built on data, but one built on accumulated inference, and it is significantly harder to regulate than traditional data portability because nobody can agree on what you would even export.

Enterprises buying AI platforms right now are making decisions that will look very different in three years when the export experience has quietly evolved. The procurement checklist for AI tools is going to need a new section: not just “can we export our data” but “can we export the thing the model learned, and does the export actually work.”

So, what does good look like?

Most enterprises are not ready for this conversation, but I think there are a few things the fast movers would do:

They would treat AI memory as an asset class, not a feature - this means asking: what is accumulating inside these tools, who owns it, and what happens to it when people and platforms change?

They would update their offboarding processes: the same way a thoughtful legal team asks a departing employee to return physical materials and revoke system access, some companies would begin to ask: what did you export, and what did you bring with you? This sounds invasive today, but is probably going to become standard.

They would start thinking about memory architecture at the team level - the goal wouldn’t be to prevent employees from having useful AI context, but to make sure that context doesn’t live entirely in individual memory stores. For example, shared projects, shared prompts, and shared context documents that sit at the team layer and survive individual transitions. The challenge is that the most valuable parts of memory - the subtle inference layer, the cognitive pattern - would resist this. You can share a knowledge base, but cannot easily share what the model inferred from watching someone think.

And the smarter procurement teams would start asking vendors harder questions about memory portability before they sign. Not “do you support export” - every vendor will say yes. But “show me what the export looks like after 18 months of usage, and show me whether another platform can actually use it,” “can I control what the export looks like in Enterprise versions vs. consumer/personal versions,” and “can I track how many employees have hit the export/import button in the last 3 months?”

I think the last question is going to separate vendors who believe in portability from vendors who are using portability as a growth lever while quietly building the walls.
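To make the tracking question concrete, here is a minimal sketch of what answering it could look like if a vendor exposed memory export/import events in an admin audit log. Everything in it is an assumption for illustration: the event shape, the action names `memory_export` and `memory_import`, and the helper `employees_moving_memory` are hypothetical, and I am not aware of any vendor that ships a log in exactly this form.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical action names; a real audit log (if one exists) may label these differently.
MEMORY_ACTIONS = {"memory_export", "memory_import"}


def employees_moving_memory(events, now, window_days=90):
    """Return the set of employee IDs with a memory export/import event in the window."""
    cutoff = now - timedelta(days=window_days)
    return {
        event["employee_id"]
        for event in events
        if event["action"] in MEMORY_ACTIONS and cutoff <= event["timestamp"] <= now
    }


# Made-up sample events, purely to show the shape of the question.
now = datetime(2025, 6, 30, tzinfo=timezone.utc)
events = [
    {"employee_id": "e1", "action": "memory_export", "timestamp": now - timedelta(days=10)},
    {"employee_id": "e1", "action": "memory_import", "timestamp": now - timedelta(days=9)},
    {"employee_id": "e2", "action": "file_upload", "timestamp": now - timedelta(days=5)},
    {"employee_id": "e3", "action": "memory_export", "timestamp": now - timedelta(days=200)},
]

recent = employees_moving_memory(events, now)
print(f"{len(recent)} employee(s) moved memory in the last 90 days: {sorted(recent)}")
```

The query itself is trivial. The hard part is whether the platform records these events at all, and whether an enterprise admin can see them - which is exactly what the procurement question is probing.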

But, oh the irony

The feature that makes AI more useful - continuity, context, not having to start over - is the same feature that makes the governance problem harder and the platform lock-in deeper. An AI that truly knows how you work is more valuable to you precisely because it captured the things you couldn’t have written down yourself; that’s what makes it powerful and that’s also what makes it ungovernable by conventional means, and what makes the cold-start cost of switching grow invisibly over time.

We have spent decades arguing about data portability at the file level, and now we are about to have a much stranger version of that argument at the cognition level.

The policy frameworks, the legal precedents, the offboarding checklists - none of them exist yet for this. The platform playbooks, though, do exist, and we’ve seen every move before. We just haven’t seen them played with this kind of asset.